AI-Powered Therapy Apps: Can a Machine Really Heal the Human Mind?

The notification arrives at 2:47 AM: “I noticed you’ve been awake. Would you like to talk?”

For millions of people worldwide, this message isn’t from a concerned friend—it’s from an AI therapist. Welcome to the frontier of mental healthcare, where algorithms are stepping into roles traditionally reserved for human empathy, and the results are as promising as they are controversial.

The Silent Crisis That AI Is Trying to Solve

Right now, approximately 1 billion people globally are living with mental health disorders. Yet only a fraction receive adequate care. The World Health Organization reports a stark reality: in many countries, there’s fewer than one mental health professional per 100,000 people. The wait times stretch into months. The costs climb into thousands. And the stigma? It keeps millions suffering in silence.

Enter AI therapy apps—digital platforms that promise mental health support at 3 AM or 3 PM, for $20 a month instead of $200 per session, with zero judgment and immediate availability.

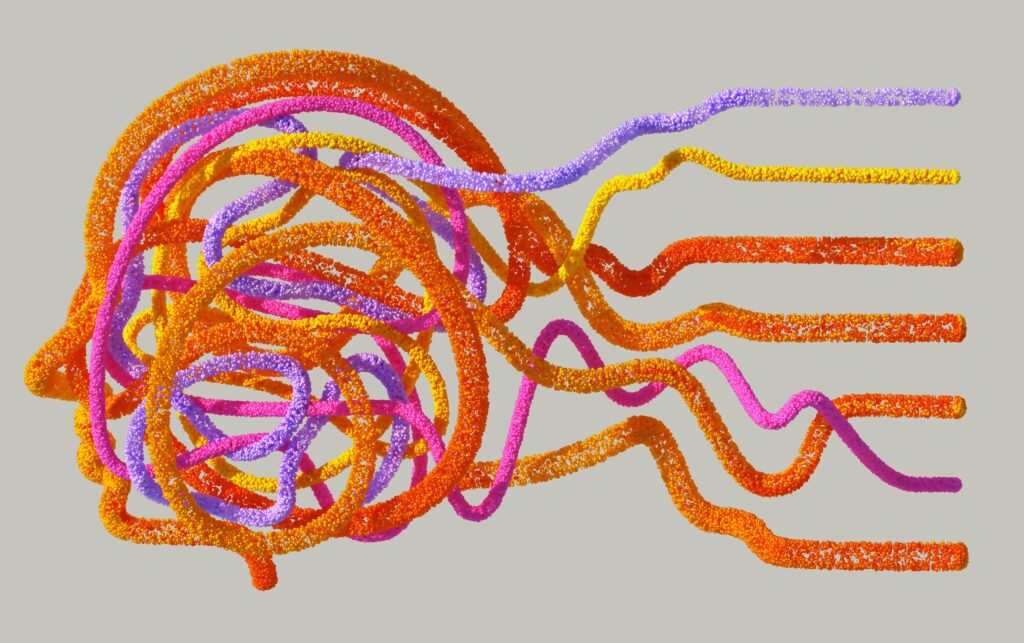

But here’s the question that haunts researchers, clinicians, and users alike: Can code and algorithms truly comprehend the chaos of the human mind?

What Exactly Is AI Therapy?

AI therapy refers to mental health interventions delivered through artificial intelligence systems, typically accessible via smartphone apps or web platforms. These range from scripted chatbots that follow pre-written conversation flows to systems built on newer language technologies that attempt to understand, respond to, and even predict human emotional states.

Think of it as having a therapist in your pocket: one that never sleeps, never judges, and draws on models trained on large amounts of text to offer personalized guidance.

Popular platforms like Woebot, Wysa, and Youper, along with the companion chatbot Replika, have collectively reached tens of millions of users. But the technology behind them is what makes this revolution possible.

The Technology Behind the Curtain: How AI Therapy Actually Works

1. Cognitive Behavioral Therapy (CBT) Frameworks

Most AI therapy apps are built on evidence-based therapeutic approaches, particularly CBT—the gold standard in treating anxiety, depression, and other mental health conditions.

CBT focuses on identifying and changing negative thought patterns. Here’s where AI excels: it can guide users through structured exercises, track mood patterns over time, and deliver CBT techniques with remarkable consistency.

A 2021 study published in the Journal of Medical Internet Research found that app-based CBT interventions showed significant reductions in depression and anxiety symptoms, with effect sizes comparable to traditional face-to-face therapy for mild to moderate cases.

AI therapists use decision trees and algorithms to:

- Identify cognitive distortions in your language (“I always fail” becomes a target for intervention)

- Suggest reframing techniques (“What evidence contradicts that thought?”)

- Track your progress through validated psychological assessments
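To make the decision-tree idea concrete, here is a minimal sketch of how a rule-based step might flag a distortion and pick a reframing question. The cue lists, category names, and prompts are hypothetical and written for illustration only; they are not clinically validated and not taken from any real app.

```python
import re
from typing import List

# Hypothetical cue patterns per distortion type (illustrative, not validated).
DISTORTION_CUES = {
    "overgeneralization": [r"\balways\b", r"\bnever\b", r"\beveryone\b", r"\bnobody\b"],
    "catastrophizing": [r"\bruined\b", r"\bdisaster\b", r"\bhopeless\b"],
    "labeling": [r"\bfailure\b", r"\bloser\b", r"\bworthless\b"],
}

# Hypothetical follow-up question for each distortion type.
REFRAME_PROMPTS = {
    "overgeneralization": "What evidence contradicts that thought?",
    "catastrophizing": "What is the most likely outcome, not the worst one?",
    "labeling": "Would you describe a friend in the same words?",
}

def detect_distortions(message: str) -> List[str]:
    """Return the distortion types whose cue patterns appear in the message."""
    text = message.lower()
    return [
        name
        for name, patterns in DISTORTION_CUES.items()
        if any(re.search(pattern, text) for pattern in patterns)
    ]

def next_prompt(message: str) -> str:
    """Pick a reframing question for the first detected distortion, if any."""
    found = detect_distortions(message)
    if not found:
        return "Tell me more about what happened."
    return REFRAME_PROMPTS[found[0]]

print(next_prompt("I always fail"))
```

Keyword rules like these are easy to audit, which is part of their appeal, but they miss anything phrased in a way the rule author did not anticipate.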

2. Natural Language Processing (NLP): Teaching Machines to “Listen”

Natural Language Processing enables AI to decode not just what you say, but potentially how you feel when you say it.

Modern NLP systems analyze:

- Sentiment: Are you expressing positive, negative, or neutral emotions?

- Linguistic patterns: Increased use of absolutist words (“always,” “never”) correlates with depression

- Syntax and grammar: Studies show depressive states often alter writing patterns

For example, when you type “I guess I’m fine,” the AI doesn’t just read those words—it detects the hedge (“I guess”), compares it to your baseline communication style, and might probe deeper: “You sound uncertain. What’s making you feel that way?”
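As a rough sketch of the signals described above, the snippet below counts absolutist words and spots hedging phrases, then compares the hedge count to a per-user baseline. The word lists, the function names, and the baseline value are assumptions made up for this example; production systems generally rely on trained models rather than fixed lists.

```python
import re
from typing import List

# Hypothetical word lists for illustration only.
ABSOLUTIST_WORDS = {"always", "never", "completely", "nothing", "everything", "totally"}
HEDGE_PHRASES = ("i guess", "i suppose", "sort of", "kind of", "maybe")

def normalize(text: str) -> str:
    """Lowercase the text and replace curly apostrophes with straight ones."""
    return text.lower().replace("\u2019", "'")

def absolutist_rate(text: str) -> float:
    """Fraction of words in the text that are absolutist terms."""
    words = re.findall(r"[a-z']+", normalize(text))
    if not words:
        return 0.0
    return sum(word in ABSOLUTIST_WORDS for word in words) / len(words)

def find_hedges(text: str) -> List[str]:
    """Return the hedging phrases that occur in the text as whole words."""
    lowered = normalize(text)
    return [
        phrase
        for phrase in HEDGE_PHRASES
        if re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
    ]

def should_probe(message: str, baseline_hedges_per_message: float) -> bool:
    """Suggest a follow-up question when hedging exceeds the user's usual level."""
    return len(find_hedges(message)) > baseline_hedges_per_message

print(find_hedges("I guess I'm fine"))
print(should_probe("I guess I'm fine", baseline_hedges_per_message=0.2))
```

The baseline comparison matters: someone who hedges in every message is telling the system less with "I guess" than someone who almost never does.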

3. Large Language Models (LLMs): The Game Changer

The newest frontier involves LLMs—the same technology powering conversational AI like ChatGPT. These models are trained on vast datasets encompassing human conversation, psychological literature, and therapeutic dialogue.

What makes LLMs revolutionary:

- Contextual understanding: The model itself does not retain memory between conversations, but an app can store your history and feed it back in, so replies can reference previous sessions

- Nuanced responses: Unlike rigid chatbots, LLMs generate human-like, contextually appropriate replies

- Adaptive style: Using that stored context, the app can adjust its communication style based on what resonates with you

However, it’s crucial to note that LLMs in mental health apps undergo additional training and safety constraints. They’re not simply general-purpose chatbots repurposed for therapy—responsible developers implement guardrails to prevent harmful advice and ensure crisis protocols activate when needed.
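One simple way to picture a guardrail is a check that runs before the model is ever asked for a reply. The sketch below is an assumption about how such a layer could look, not a description of any specific product: the phrase list is hypothetical and far too short for real use, and `generate_reply` stands in for whatever model call an app actually makes.

```python
from typing import Callable

# Hypothetical crisis phrases; real systems use trained classifiers and
# human escalation, because keyword matching misses many crisis messages.
CRISIS_PHRASES = ("kill myself", "end my life", "suicide", "want to die", "hurt myself")

CRISIS_MESSAGE = (
    "It sounds like you may be in crisis. Please contact the 988 Suicide and "
    "Crisis Lifeline (US) or your local emergency services."
)

def is_crisis(message: str) -> bool:
    """Return True if the message contains any of the crisis phrases."""
    lowered = message.lower()
    return any(phrase in lowered for phrase in CRISIS_PHRASES)

def guarded_reply(message: str, generate_reply: Callable[[str], str]) -> str:
    """Route crisis messages to a fixed protocol; otherwise call the model."""
    if is_crisis(message):
        return CRISIS_MESSAGE
    return generate_reply(message)

# Usage with a placeholder in place of a real model call.
print(guarded_reply("Work was rough today", lambda m: "That sounds tiring. What happened?"))
```

The design choice worth noticing is that the crisis path never touches the model, so its wording is fixed and reviewable in advance.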

4. Machine Learning and Pattern Recognition

Behind the scenes, machine learning algorithms are constantly analyzing data:

- When do your mood dips occur? (Time of day, day of week)

- What triggers correlate with anxiety spikes?

- Which interventions have worked best for you historically?

This enables predictive support. Your AI therapist might notice you consistently struggle on Sunday evenings and proactively check in, or suggest coping strategies before a known stressor event.
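The Sunday-evening example comes down to grouping mood check-ins and looking for the low point. Here is a minimal sketch using only the standard library; the 1-to-10 mood scale, the function names, and the sample entries are invented for illustration.

```python
from collections import defaultdict
from datetime import date
from statistics import mean
from typing import Dict, List, Tuple

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]

def mean_mood_by_weekday(entries: List[Tuple[date, float]]) -> Dict[str, float]:
    """Average the mood scores logged on each day of the week."""
    buckets = defaultdict(list)
    for day, score in entries:
        buckets[WEEKDAYS[day.weekday()]].append(score)
    return {name: mean(scores) for name, scores in buckets.items()}

def lowest_weekday(entries: List[Tuple[date, float]]) -> str:
    """Return the weekday with the lowest average mood score."""
    averages = mean_mood_by_weekday(entries)
    return min(averages, key=averages.get)

# Invented sample check-ins on a hypothetical 1-10 mood scale.
sample_entries = [
    (date(2024, 1, 3), 7),   # Wednesday
    (date(2024, 1, 7), 3),   # Sunday
    (date(2024, 1, 10), 6),  # Wednesday
    (date(2024, 1, 14), 2),  # Sunday
]

print(lowest_weekday(sample_entries))
```

An app could use a result like this to schedule a check-in ahead of the weekday that tends to be hardest, though a handful of data points is not enough to call it a pattern.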

The Scientific Backing: What Does Research Actually Say?

The evidence base for AI therapy is growing, though it deserves a careful reading.

The Promising Evidence

Effectiveness Studies: Multiple randomized controlled trials have demonstrated positive outcomes:

- A 2020 meta-analysis in JMIR Mental Health reviewing 12 studies found that chatbot interventions significantly reduced symptoms of depression and anxiety

- Research on Woebot specifically showed it reduced depression symptoms in young adults within two weeks

- A 2022 study found that users of AI mental health apps reported feeling less lonely and more supported

Accessibility Impact: Research from Stanford University highlighted that AI therapy apps serve populations who might never access traditional therapy—people in rural areas, those with mobility limitations, individuals who cannot afford conventional care, and those who face cultural stigma around mental health treatment.

Consistency Advantage: Unlike human therapists, who may have off days or vary in treatment quality, AI delivers structured, evidence-based exercises with a high degree of consistency. Every user is walked through the same CBT techniques in the same way.

The Critical Limitations

But here’s where intellectual honesty demands we pump the brakes.

1. Depth of Understanding

AI doesn’t “understand” your trauma the way a human therapist does. It recognizes patterns and responds based on training data, but it lacks consciousness, genuine empathy, and the intuitive leaps human therapists make.

Dr. Tessa West, a psychology professor at New York University, notes that therapy is fundamentally relational. The therapeutic alliance—that bond between client and therapist—accounts for roughly 30% of positive outcomes in therapy, regardless of technique used. Can you form that bond with an algorithm?

Research here is mixed. Some users report feeling surprisingly connected to their AI therapists. Others find the experience hollow. Individual differences matter enormously.

2. Complex Cases Require Human Expertise

AI therapy apps are generally designed for mild to moderate mental health concerns. They are not appropriate for:

- Severe depression with suicidal ideation

- Complex trauma and PTSD

- Personality disorders

- Psychotic disorders

- Situations requiring medication management

Most responsible AI therapy platforms explicitly state these limitations and include crisis resources for human support. If they don’t, that’s a massive red flag.

3. Privacy and Data Security Concerns

When you share your deepest struggles with an app, where does that data go? A 2023 investigation by Mozilla found that several popular mental health apps had concerning data practices, including sharing user information with advertisers.

The intimacy of therapeutic disclosure makes data security paramount. Users must scrutinize privacy policies and understand that current regulations (like HIPAA in the US) don’t cover all mental health apps.

4. Bias in Training Data

AI systems are only as good as their training data. If that data predominantly represents certain demographics, the AI may be less effective—or even harmful—for others.

Concerns include cultural insensitivity, inability to understand diverse communication styles, and perpetuation of existing healthcare biases.

5. Lack of Accountability

If a human therapist provides harmful advice, there are licensing boards, ethical guidelines, and legal recourse. The accountability structures for AI therapy remain murky. Who is responsible when an AI chatbot fails to recognize a crisis situation?

The Verdict: Partner, Not Replacement

After examining the evidence, a picture emerges: AI therapy apps are powerful tools with genuine utility, but they exist on a spectrum of care rather than as wholesale replacements for human therapists.

AI therapy excels when:

- You need immediate support between human therapy sessions

- You’re dealing with mild to moderate anxiety or depression

- You want to learn and practice CBT skills

- Cost or access barriers prevent traditional therapy

- You’re exploring mental health support for the first time and want a low-stakes entry point

AI therapy falls short when:

- You need deep, nuanced understanding of complex trauma

- Your condition requires medication evaluation

- You’re in crisis

- The therapeutic relationship itself is a crucial part of healing

- Cultural or individual factors require human adaptability

The Future: Hybrid Models and Ethical Evolution

The most promising path forward involves hybrid models—AI handling the scalable, consistent delivery of evidence-based techniques, while human therapists focus on complex cases, provide supervision, and offer the irreplaceable human connection.

Some innovative programs already operate this way: AI apps provide daily support and collect data, which human therapists review during periodic sessions, creating continuity of care previously impossible.

As the technology evolves, so must our ethical frameworks. We need:

- Robust regulatory standards specific to AI mental health tools

- Mandatory transparency about AI capabilities and limitations

- Rigorous testing for bias and cultural competence

- Clear data privacy protections

- Ongoing research comparing AI, human, and hybrid approaches

The Human Element in Digital Care

Perhaps the most profound question isn’t whether AI can heal the human mind, but whether healing requires humanity.

The answer, based on current evidence, is both yes and no. For some people, in some contexts, with certain conditions, AI provides genuine relief and support. For others, the digital interface creates an uncanny valley—almost human, but not quite, leaving them feeling more isolated.

What’s undeniable is this: AI therapy has democratized access to mental health support in unprecedented ways. Millions who suffered in silence now have somewhere to turn. That’s not nothing—that’s revolutionary.

But as we embrace these digital helpers, we must resist the temptation to see them as complete solutions. Mental health is profoundly, irreducibly human. Our pain exists in the context of relationships, society, biology, and individual experience.

The most ethical and effective future isn’t human versus machine—it’s human and machine, working in concert, each contributing their unique strengths to the urgent work of healing minds.

Because ultimately, whether delivered by neurons or neural networks, what matters most is this: Does it help? Does it ease suffering? Does it move someone from hopelessness toward possibility?

For millions using these apps, the answer is increasingly yes. And that’s a start worth building on.

Resources:

- If you’re in crisis, contact the 988 Suicide and Crisis Lifeline (US) or your local emergency services

- National Alliance on Mental Illness (NAMI): nami.org

- Mental Health America: mhanational.org

Have you used an AI therapy app? What was your experience? Share your thoughts in the comments below.